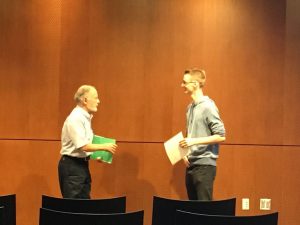

My MSc student Qinghong (Jackie) Xu successfully defended her Master’s thesis. Congratulations!

Jackie’s thesis is titled “Compressive Imaging with Total Variation Regularization and Application to Auto-calibration of Parallel Magnetic Resonance Imaging”. It contains a novel (and technical) theoretical analysis of TV regularization in compressed sensing, as well as a new method for auto-calibration in parallel MRI. Stay tuned for the paper later this year!